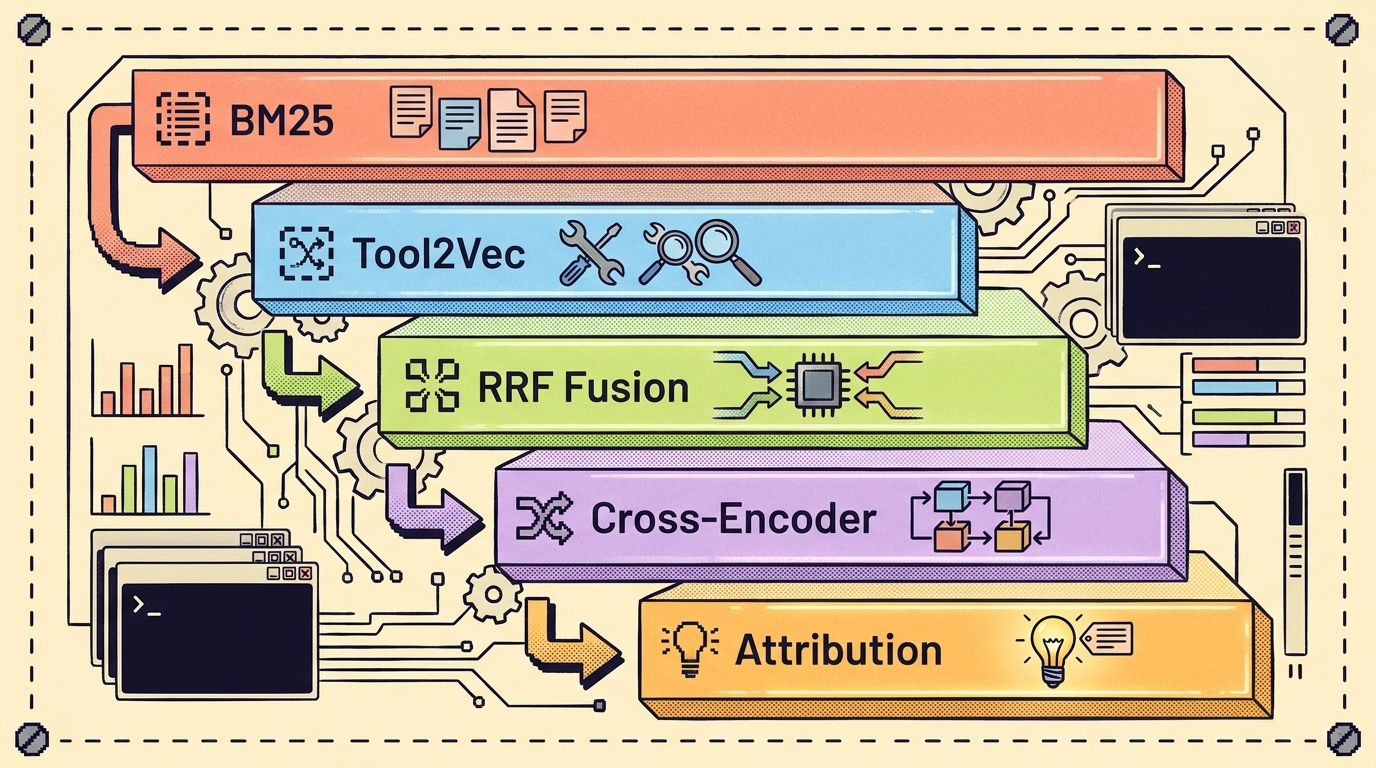

Five stages. One pipeline. Every caller goes through it.

The Skill Matching Cascade: How WinDAGs Picks the Right Expert

We replaced keyword matching with a five-stage retrieval cascade — BM25, Tool2Vec, RRF fusion, cross-encoder rerank, attribution k-NN. Here's why each stage exists, what it costs, and how to tell which one is doing the work.

You ask your AI to "design a paginated GraphQL endpoint that won't fall over at scale." It reaches for the textbook answer: LIMIT and OFFSET. That works fine right up until your dataset hits a million rows, and every request for a deep page makes the database scan and throw away every row before the offset.

What you wanted was the cursor-pagination expert. What you got was the API generalist. Both exist in the catalog. The matcher picked the wrong one, and now you're three hours into the wrong implementation.

This is the most important thing WinDAGs does, and the part that took us four tries to get right: find the right specialist for the task in front of the user, out of 503 of them, fast enough to feel instant and accurate enough that the agent's first answer is the one a senior engineer would have written.

This is the version that finally stuck. Five stages. One pipeline. Every search goes through it.

Why not just embeddings? Or BM25? Or a bandit?

Every approach below is one we tried and graded against a real query.

[Report card: Five Approaches to Skill Retrieval. Pass rate: 1 of 5. Grader: senior engineer. Target answer: cursor-pagination-graphql, the skill an experienced engineer would reach for.]

The lesson sitting under all of these: skill selection is a retrieval problem, not a bandit problem. You're trying to find the most relevant document given a query. The day we reframed it from "learn an exploration policy over fungible arms" to "rank documents," the whole thing shrank from "open research question" to "BM25 plus a re-ranker, we know how this goes."

The cascade

Five stages, in order. Earlier stages are cheap and high-recall. Later stages are expensive and high-precision. Each stage uses the previous stage's output and either re-ranks it or blends with it.

Every caller goes through the same SkillSearchService.search() method: the DAG executor, the MCP server, the slash skill, the shortlister. One index, one pipeline, one scoring path. That wasn't always true, and the bugs were terrible.

Stage 1: BM25 with Porter stemming and bigrams

BM25 is the floor. It's the algorithm that powers Lucene, Elasticsearch, and almost every working search engine on earth. We use it for one reason: when the query and the skill share concrete vocabulary ("postgres", "stripe", "supabase"), BM25 finds them instantly with no cold start, no embedding generation, and no API calls.

Two refinements matter:

- Porter stemming. "Optimizing" matches "optimization." "Connections" matches "connection pool." Without stemming, every inflected form is a separate term, and recall falls off fast for any query not worded exactly like the skill.

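- Bigrams. "React Native" and "Node React" are not the same skill, but single-token BM25 only sees loose words: a Node/React skill that mentions "native modules" shares both "react" and "native" with a React Native query. Bigram features treat "react native" as one term, which only the React Native skill contains.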

Cost: 5 ms over 503 skills, all in-process. No network. No keys. This stage alone is what makes the MCP server work with zero configuration.
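A minimal, dependency-free sketch of this stage, assuming the textbook Okapi BM25 formula with common defaults (k1 = 1.5, b = 0.75) and bigrams joined with an underscore. The names `tokenize` and `BM25` are illustrative, not WinDAGs' code. Stemming is left out to keep it self-contained; NLTK's `PorterStemmer().stem()` is one place a fuller version could get it.

```python
import math
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercased unigrams plus adjacent-word bigrams such as "react_native".

    Stemming is omitted; a fuller version would stem each unigram first.
    """
    words = "".join(c if c.isalnum() else " " for c in text.lower()).split()
    bigrams = [f"{a}_{b}" for a, b in zip(words, words[1:])]
    return words + bigrams


class BM25:
    """Okapi BM25 over an in-memory {skill_name: text} corpus."""

    def __init__(self, docs: dict[str, str], k1: float = 1.5, b: float = 0.75):
        self.k1, self.b = k1, b
        self.tf = {name: Counter(tokenize(text)) for name, text in docs.items()}
        self.doc_len = {name: sum(c.values()) for name, c in self.tf.items()}
        self.avgdl = sum(self.doc_len.values()) / len(self.doc_len)
        # Document frequency: how many skills contain each term.
        df = Counter(term for counts in self.tf.values() for term in counts)
        n = len(docs)
        # Lucene-style idf: the +1 inside the log keeps common terms non-negative.
        self.idf = {t: math.log(1 + (n - f + 0.5) / (f + 0.5)) for t, f in df.items()}

    def score(self, query: str, name: str) -> float:
        tf, dl = self.tf[name], self.doc_len[name]
        total = 0.0
        for term in tokenize(query):
            f = tf.get(term, 0)
            if f == 0:
                continue
            # Term-frequency saturation (k1) and length normalization (b).
            norm = f + self.k1 * (1 - self.b + self.b * dl / self.avgdl)
            total += self.idf[term] * f * (self.k1 + 1) / norm
        return total

    def search(self, query: str, top_k: int = 20) -> list[tuple[str, float]]:
        scored = [(name, self.score(query, name)) for name in self.tf]
        hits = [pair for pair in scored if pair[1] > 0]
        return sorted(hits, key=lambda p: p[1], reverse=True)[:top_k]


# Two hypothetical skills of equal length that both contain "react" and "native".
skills = {
    "react-native-expert": "Build mobile apps with React Native",
    "react-web-expert": "React web apps with native APIs",
}
results = BM25(skills).search("react native")
```

Both skills get identical credit for the unigrams; only the bigram term `react_native` separates them, which is exactly the case the bullet above describes.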

Stage 2: Tool2Vec — embedding what the skill is used for, not what it is

The earlier embedding-based approach embedded skill descriptions: "An expert at designing REST APIs..." That's what the skill is. The query, on the other hand, is what the user wants: "Design a paginated endpoint for our search results." Those live in different semantic spaces. Cosine similarity between them is unreliable.

Tool2Vec (SqueezeAI Lab) flips it. For each skill:

- Ask Haiku: "Generate 15 diverse task descriptions that would require this skill."

- Embed each of the 15 with all-MiniLM-L6-v2 (local, 384-dim).

- Average them. That's the skill's vector.
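The averaging step is simple enough to sketch. This is an illustration, not the Tool2Vec reference code: `toy_embed` is a hypothetical bag-of-words stand-in for all-MiniLM-L6-v2 (with sentence-transformers installed, `SentenceTransformer("all-MiniLM-L6-v2").encode` would be the real encoder), and the synthetic tasks are hand-written rather than generated by Haiku.

```python
import math
from typing import Callable, Sequence

Vector = list[float]


def mean_vector(vectors: Sequence[Vector]) -> Vector:
    """Element-wise average of equally sized vectors."""
    dim = len(vectors[0])
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(dim)]


def cosine(a: Vector, b: Vector) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def tool2vec_vector(tasks: Sequence[str], embed: Callable[[str], Vector]) -> Vector:
    """Skill vector = mean embedding of tasks that would need the skill."""
    return mean_vector([embed(t) for t in tasks])


# Hypothetical stand-in for a sentence encoder: word counts over a tiny vocabulary.
VOCAB = ["paginate", "cursor", "endpoint", "cache", "redis", "invalidate"]


def toy_embed(text: str) -> Vector:
    words = text.lower().split()
    return [float(words.count(w)) for w in VOCAB]


pagination = tool2vec_vector(
    ["paginate results with a cursor", "cursor endpoint for feeds"], toy_embed
)
caching = tool2vec_vector(
    ["invalidate redis cache", "cache endpoint responses"], toy_embed
)
# The query is embedded exactly like the synthetic tasks: same space, same encoder.
query = toy_embed("paginate the search endpoint")
```

The point of the design is the last line: the query and the skill vector come out of the same encoder applied to the same kind of text (task descriptions), so comparing them with cosine similarity is apples to apples.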

Now the query and the skill vector live in the same space — the space of task descriptions. Cosine similarity becomes meaningful. This is the part that made us go "oh." Once you see it, you can't un-see it: every embedding-based retrieval system that compares queries against descriptions is doing dot products between vectors that don't belong on the same chart. Tool2Vec just… puts them on the same chart.

The Tool2Vec paper claims +27% Recall@K over description-based embeddings. We see similar gains in practice. Cost: ~$0.001 per skill via Haiku × 503 skills = ~$0.50. One time. Cached forever, keyed by content hash, auto-invalidates when a skill changes.
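One way to get "cached forever, auto-invalidates on change" is to key the cache by a hash of the skill's content, so an edited skill simply misses. A sketch assuming a single JSON file and SHA-256; `VectorCache`, `content_key`, and the file layout are hypothetical, not the plugin's actual cache format.

```python
import hashlib
import json
import tempfile
from pathlib import Path
from typing import Callable


def content_key(skill_text: str) -> str:
    """Any edit to the skill text yields a new key, so stale vectors are never served."""
    return hashlib.sha256(skill_text.encode("utf-8")).hexdigest()


class VectorCache:
    """JSON file mapping content hash -> skill vector (hypothetical on-disk format)."""

    def __init__(self, path: Path):
        self.path = path
        self.data: dict[str, list[float]] = (
            json.loads(path.read_text()) if path.exists() else {}
        )

    def get_or_build(self, skill_text: str, build: Callable[[str], list[float]]) -> list[float]:
        key = content_key(skill_text)
        if key not in self.data:
            # Cache miss: the only path that would call Haiku and the embedder.
            self.data[key] = build(skill_text)
            self.path.write_text(json.dumps(self.data))
        return self.data[key]


if __name__ == "__main__":
    calls = []

    def fake_build(text: str) -> list[float]:
        calls.append(text)
        return [0.1, 0.2]

    with tempfile.TemporaryDirectory() as d:
        cache = VectorCache(Path(d) / "tool2vec.json")
        cache.get_or_build("skill v1", fake_build)
        cache.get_or_build("skill v1", fake_build)  # served from cache
        cache.get_or_build("skill v2", fake_build)  # edited skill, rebuilt
        print(len(calls))  # 2
```

Entries for old versions stay in the file until something prunes them; that's the trade for never having to track invalidation explicitly. At roughly $0.001 per miss, a full rebuild of 503 skills is the ~$0.50 quoted above, and an edit to one skill costs one miss.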

Stage 3: RRF fusion

We have two ranked lists now: BM25 and Tool2Vec. Each is good at things the other is bad at. BM25 nails exact terminology. Tool2Vec catches "this task is like this skill" without shared vocabulary. Combining them is the obvious move.

Reciprocal Rank Fusion is the obvious method. For each candidate, sum 1 / (k + rank) across both lists, where k is a small constant (we use 60). It's parameter-light, it doesn't require calibrating two scoring scales, and it just works.

If BM25 puts postgresql-optimization at rank 2 and Tool2Vec puts it at rank 4, the fused score is 1/62 + 1/64 ≈ 0.0318. If a candidate only appears in one list, it still contributes, but with short lists it ranks below candidates that appear in both: even a rank-1 hit in a single list scores only 1/61 ≈ 0.0164, while rank 20 in both lists scores 2/80 = 0.025. That's the property we want: agreement between channels is signal.
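RRF fits in a few lines. A sketch with k = 60 as in the text; apart from postgresql-optimization, the skill names in the two input lists are made up for the example.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[tuple[str, float]]:
    """Sum 1 / (k + rank) over every list a candidate appears in (ranks start at 1)."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, name in enumerate(ranking, start=1):
            scores[name] += 1.0 / (k + rank)
    return sorted(scores.items(), key=lambda p: p[1], reverse=True)


bm25_top = ["supabase-auth", "postgresql-optimization", "stripe-billing"]
tool2vec_top = ["query-planner", "sql-indexing", "connection-pooling", "postgresql-optimization"]
fused = reciprocal_rank_fusion([bm25_top, tool2vec_top])
# fused[0] is postgresql-optimization with 1/62 + 1/64, about 0.0318
```

Only ranks enter the formula, never raw scores, which is why BM25's unbounded scores and Tool2Vec's cosine similarities can be fused without calibration.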

Stage 4: Cross-encoder rerank

By stage 3 we have a top-20 that's pretty good. The cross-encoder pushes precision higher.

A bi-encoder (Tool2Vec) embeds the query and each skill independently and compares them. Fast and scalable, since skill vectors are computed once ahead of time, but limited: the query never "sees" the skill during encoding. A cross-encoder takes (query, skill) as a single input and runs the transformer over the joint pair, which gives a much better signal of "does this skill match this query." The catch: nothing can be precomputed, so every query costs one full transformer pass per candidate, O(N) in the number of skills you rerank. We only rerank the top 20.

We use cross-encoder/ms-marco-MiniLM-L6-v2. Local. ~200 ms for 20 pairs on CPU. No API.
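A sketch of the rerank step with the scorer injected as a function, so it runs without model weights. `overlap_scorer` is a toy stand-in that just counts shared words; with sentence-transformers installed, the `predict` method of a `CrossEncoder` loaded with the model named above should fit the same slot, though that wiring is an assumption, not the plugin's code.

```python
from typing import Callable, Sequence

# A pair scorer takes (query, skill_text) pairs and returns one relevance score each.
PairScorer = Callable[[list[tuple[str, str]]], Sequence[float]]


def rerank(
    query: str,
    candidates: Sequence[tuple[str, str]],
    score_pairs: PairScorer,
    top_n: int = 20,
) -> list[tuple[str, float]]:
    """Re-order the first top_n (name, text) candidates by a joint query-skill score."""
    head = list(candidates[:top_n])
    # One scorer call per query; for a real cross-encoder that is top_n forward passes.
    scores = score_pairs([(query, text) for _, text in head])
    scored = [(name, float(s)) for (name, _), s in zip(head, scores)]
    return sorted(scored, key=lambda p: p[1], reverse=True)


def overlap_scorer(pairs: list[tuple[str, str]]) -> list[float]:
    """Toy stand-in for a cross-encoder: shared-word count per pair."""
    return [float(len(set(q.lower().split()) & set(d.lower().split()))) for q, d in pairs]


candidates = [
    ("api-generalist", "design rest api endpoints"),
    ("cursor-pagination-graphql", "cursor based pagination for graphql endpoints"),
]
best = rerank("cursor pagination graphql endpoint", candidates, overlap_scorer)
```

Capping `top_n` is what keeps the cost bounded: the expensive scorer never sees the long tail that BM25 and Tool2Vec already filtered out.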

Stage 5: Attribution k-NN — learn from your own history

The first four stages know nothing about whether the skills they pick actually work. That's where attribution comes in.

Every time /next-move runs and you accept/modify/reject the prediction, every time a graft happens and the agent succeeds or stalls — those events get logged to ~/.windags/skill-state.db (SQLite). Each row records:

- The query (task description) and its embedding

- The skill that got picked

- Five separate signals: did it complete, did the user accept it, did downstream nodes rate the output, did the agent self-report high confidence, was the work actually shipped
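To make the row shape concrete, here is a hypothetical SQLite schema with one column per signal. The table and column names, the float32 BLOB encoding, and `log_event` are all assumptions; the post only says what each row records.

```python
import sqlite3
from array import array
from typing import Optional

SCHEMA = """
CREATE TABLE IF NOT EXISTS skill_events (
    id                INTEGER PRIMARY KEY,
    query             TEXT NOT NULL,
    embedding         BLOB NOT NULL,  -- float32 vector packed with array('f')
    skill             TEXT NOT NULL,
    completed         INTEGER,        -- 1 / 0, NULL when unknown
    accepted          INTEGER,        -- user accepted the prediction
    downstream_rating REAL,           -- rating from downstream nodes
    self_confident    INTEGER,        -- agent self-reported high confidence
    shipped           INTEGER,        -- work actually shipped
    created_at        TEXT DEFAULT CURRENT_TIMESTAMP
);
CREATE INDEX IF NOT EXISTS idx_skill_events_skill ON skill_events (skill);
"""


def pack(vec: list[float]) -> bytes:
    return array("f", vec).tobytes()


def unpack(blob: bytes) -> list[float]:
    values = array("f")
    values.frombytes(blob)
    return list(values)


def log_event(conn: sqlite3.Connection, query: str, embedding: list[float], skill: str, *,
              completed: Optional[bool] = None, accepted: Optional[bool] = None,
              downstream_rating: Optional[float] = None,
              self_confident: Optional[bool] = None, shipped: Optional[bool] = None) -> None:
    conn.execute(
        "INSERT INTO skill_events (query, embedding, skill, completed, accepted,"
        " downstream_rating, self_confident, shipped) VALUES (?, ?, ?, ?, ?, ?, ?, ?)",
        (query, pack(embedding), skill, completed, accepted,
         downstream_rating, self_confident, shipped),
    )


conn = sqlite3.connect(":memory:")  # a real install would open ~/.windags/skill-state.db
conn.executescript(SCHEMA)
log_event(conn, "paginate the feed", [0.5, 0.25], "cursor-pagination-graphql",
          completed=True, accepted=True)
```

Keeping the five signals in separate nullable columns means a late signal (say, "shipped") can be filled in after the fact without guessing at the others.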

For a new query, we find the K most semantically similar past queries (k-NN in embedding space, K=20 by default) and ask: of those, how did each candidate skill perform? Skills with strong historical performance on similar tasks get a small score bump. Skills that historically failed on similar tasks get demoted.
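The k-NN prior can be sketched in plain Python. All of the following are hypothetical choices, not the plugin's: each past event is reduced to one outcome in [0, 1] by averaging whichever of the five signals were recorded, the bump is capped at ±0.05, and it is shrunk toward zero when only a few neighbours used that skill.

```python
import math
from collections import defaultdict
from typing import Optional, Sequence

Row = tuple[Sequence[float], str, float]  # (query embedding, skill, outcome in [0, 1])


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def outcome_score(signals: dict[str, Optional[float]]) -> float:
    """Hypothetical: average whichever of the five signals were recorded."""
    known = [v for v in signals.values() if v is not None]
    return sum(known) / len(known) if known else 0.5  # 0.5 = neutral


def attribution_bumps(query_vec: Sequence[float], history: list[Row], k: int = 20,
                      max_bump: float = 0.05, prior: float = 3.0) -> dict[str, float]:
    """Score adjustment per skill, from the k most similar past queries."""
    nearest = sorted(history, key=lambda row: cosine(query_vec, row[0]), reverse=True)[:k]
    outcomes: dict[str, list[float]] = defaultdict(list)
    for _, skill, outcome in nearest:
        outcomes[skill].append(outcome)
    bumps = {}
    for skill, values in outcomes.items():
        mean = sum(values) / len(values)               # 0 = always failed, 1 = always worked
        shrink = len(values) / (len(values) + prior)   # few neighbours, smaller bump
        bumps[skill] = max_bump * (2 * mean - 1) * shrink
    return bumps
```

Skills that never show up among the neighbours get no entry at all, so they keep whatever score the earlier stages gave them; the prior only nudges, it never vetoes.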

This is not a bandit. We are not exploring vs. exploiting. We are using historical performance as a re-ranking prior, which is what bandits should have been doing the whole time.

The data is private. It lives on your machine. There is no telemetry pipeline. (Yet — if there ever is one, it will be opt-in.)

What it costs

[Chart: per-query latency, log scale]

The cold-start cost is concentrated in Tool2Vec generation (a few minutes, $0.50, once). After that, everything is local and fast.

If you don't have any of the optional stages built — no Tool2Vec cache, no cross-encoder model, no attribution data — the cascade falls back to BM25-only and still works. That graceful degradation matters: the plugin ships BM25 today and the rest light up as their data and models are present.
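The fallback behaviour maps naturally onto optional stages. A sketch, assuming each stage is a plain function and a missing cache, model, or history is represented by `None`. `Cascade` is a hypothetical name, not the real `SkillSearchService`, and adding the attribution bump directly to the previous stage's score is a simplification.

```python
from dataclasses import dataclass
from typing import Callable, Optional

Ranking = list[tuple[str, float]]


@dataclass
class Cascade:
    """Hypothetical orchestration: BM25 always runs; the other stages are optional."""
    bm25: Callable[[str], Ranking]
    tool2vec: Optional[Callable[[str], Ranking]] = None              # None: no Tool2Vec cache
    rerank: Optional[Callable[[str, Ranking], Ranking]] = None       # None: no cross-encoder
    attribution: Optional[Callable[[str], dict[str, float]]] = None  # None: no history yet
    k_rrf: int = 60
    top_n: int = 20

    def search(self, query: str) -> Ranking:
        ranked = self.bm25(query)[: self.top_n]
        if self.tool2vec is not None:
            # RRF over the two channels; only ranks matter here.
            fused: dict[str, float] = {}
            for ranking in (ranked, self.tool2vec(query)[: self.top_n]):
                for rank, (name, _) in enumerate(ranking, start=1):
                    fused[name] = fused.get(name, 0.0) + 1.0 / (self.k_rrf + rank)
            ranked = sorted(fused.items(), key=lambda p: p[1], reverse=True)[: self.top_n]
        if self.rerank is not None:
            ranked = self.rerank(query, ranked)
        if self.attribution is not None:
            bumps = self.attribution(query)
            ranked = sorted(
                ((name, score + bumps.get(name, 0.0)) for name, score in ranked),
                key=lambda p: p[1],
                reverse=True,
            )
        return ranked


bm25_stage = lambda q: [("a", 10.0), ("b", 8.0), ("c", 1.0)]
tool2vec_stage = lambda q: [("b", 0.9), ("c", 0.8), ("a", 0.1)]
bm25_only = Cascade(bm25_stage).search("some task")            # plain BM25 order
with_fusion = Cascade(bm25_stage, tool2vec=tool2vec_stage).search("some task")
```

Every optional stage consumes and returns the same `Ranking` shape, which is what lets any of them be absent without the others noticing.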

Tell me which stage chose this skill

Every result returned by SkillSearchService includes a breakdown field — a string describing which stages contributed and what their scores were. That's how we debug bad picks:

postgresql-optimization

bm25=12.4 (rank 1)

tool2vec=0.78 (rank 2)

rrf=0.0309

cross-encoder=0.91 (rank 1)

attribution-knn=+0.04 (8 historical wins on similar queries)

final=0.95
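A breakdown like the one above can come from a small per-stage record. A sketch that reproduces the sample's format; `StageScore` and `format_breakdown` are hypothetical, and the final score is passed in rather than computed, because the post doesn't say how the stages combine into it.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class StageScore:
    stage: str
    score: float
    rank: Optional[int] = None  # shown as "(rank N)" when set
    note: str = ""              # free-text detail, e.g. historical wins
    signed: bool = False        # print an explicit sign (useful for bumps)


def format_breakdown(skill: str, stages: list[StageScore], final: float) -> str:
    lines = [skill]
    for s in stages:
        value = f"{s.score:+g}" if s.signed else f"{s.score:g}"
        line = f"{s.stage}={value}"
        if s.rank is not None:
            line += f" (rank {s.rank})"
        elif s.note:
            line += f" ({s.note})"
        lines.append(line)
    lines.append(f"final={final:g}")
    return "\n".join(lines)


example = format_breakdown("postgresql-optimization", [
    StageScore("bm25", 12.4, rank=1),
    StageScore("tool2vec", 0.78, rank=2),
    StageScore("rrf", 0.0309),
    StageScore("cross-encoder", 0.91, rank=1),
    StageScore("attribution-knn", 0.04, note="8 historical wins on similar queries", signed=True),
], final=0.95)
print(example)
```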

If the cross-encoder promotes a skill BM25 was lukewarm on, that's interesting and probably correct. If the cross-encoder demotes something BM25 loves, that's also interesting, and a sign to check the skill's description for ambiguity.

This breakdown is exposed through the MCP server: windags_skill_search returns it. So when an agent asks why it picked redis-cache-pattern over cache-strategy-invalidation-expert, the answer is right there.

Why this matters

The marketing pitch for WinDAGs is "your agent becomes an expert." That pitch is only true if the matcher actually picks the expert who fits the task. A 503-skill catalog is worse than a 10-skill catalog if the matcher can't tell them apart — you've got 503 chances to grab a near-miss.

Every stage in this cascade earns its place by adding a kind of signal the previous stages can't see. BM25 sees vocabulary. Tool2Vec sees usage semantics. RRF sees agreement. The cross-encoder sees joint context. Attribution sees outcomes.

Together they're enough to make picking 1 of 503 a tractable problem. The cascade is what gets the agent's first response to look like the one a senior engineer would have written, instead of the textbook answer.

Get it

One install, no API keys, no commands:

claude plugin marketplace add curiositech/windags-skills

claude plugin install windags-skills

That gives you the /next-move slash skill and the windags_skill_search MCP tool. Stage 1 (BM25 + Porter + bigrams over 503 skills) ships and works the moment the plugin install finishes — instantly, with zero configuration.

The remaining stages are designed to require zero work from you:

- Tool2Vec cache: pre-built once per catalog release and bundled with the plugin. No Haiku key on your end, no five-minute generation step. You get it free on claude plugin update.

- Cross-encoder model (80 MB MiniLM): distributed two ways so you don't have to think about it. The plugin postinstall hook downloads the ONNX weights into ~/.windags/models/, or, if you'd rather keep installs offline, brew install curiositech/windags/cross-encoder drops a signed, notarized bundle in place. Either path, no manual setup.

- Attribution k-NN: populates automatically as you use /next-move and accept/reject predictions. Stored in ~/.windags/skill-state.db. Nothing to enable.

If a stage's data isn't present yet (network blocked, fresh machine), the cascade gracefully falls back. Stage 1 alone is already enough to feel useful.