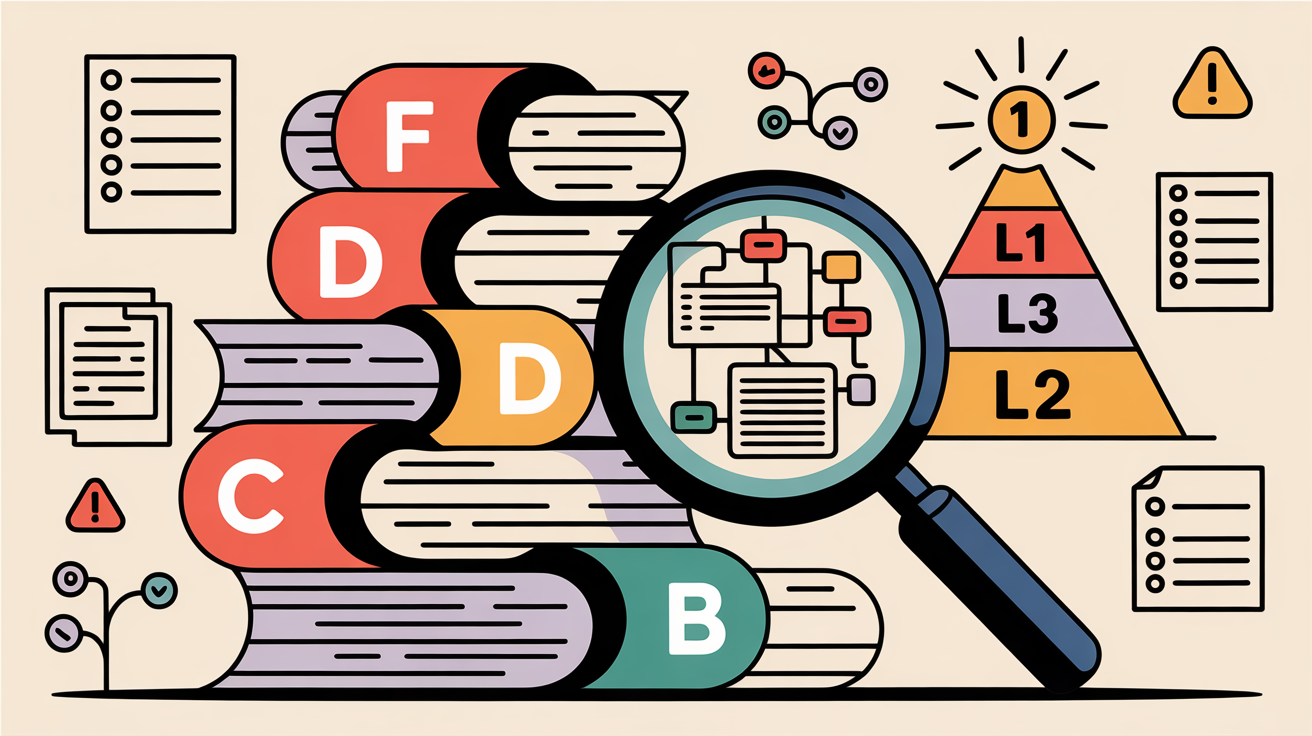

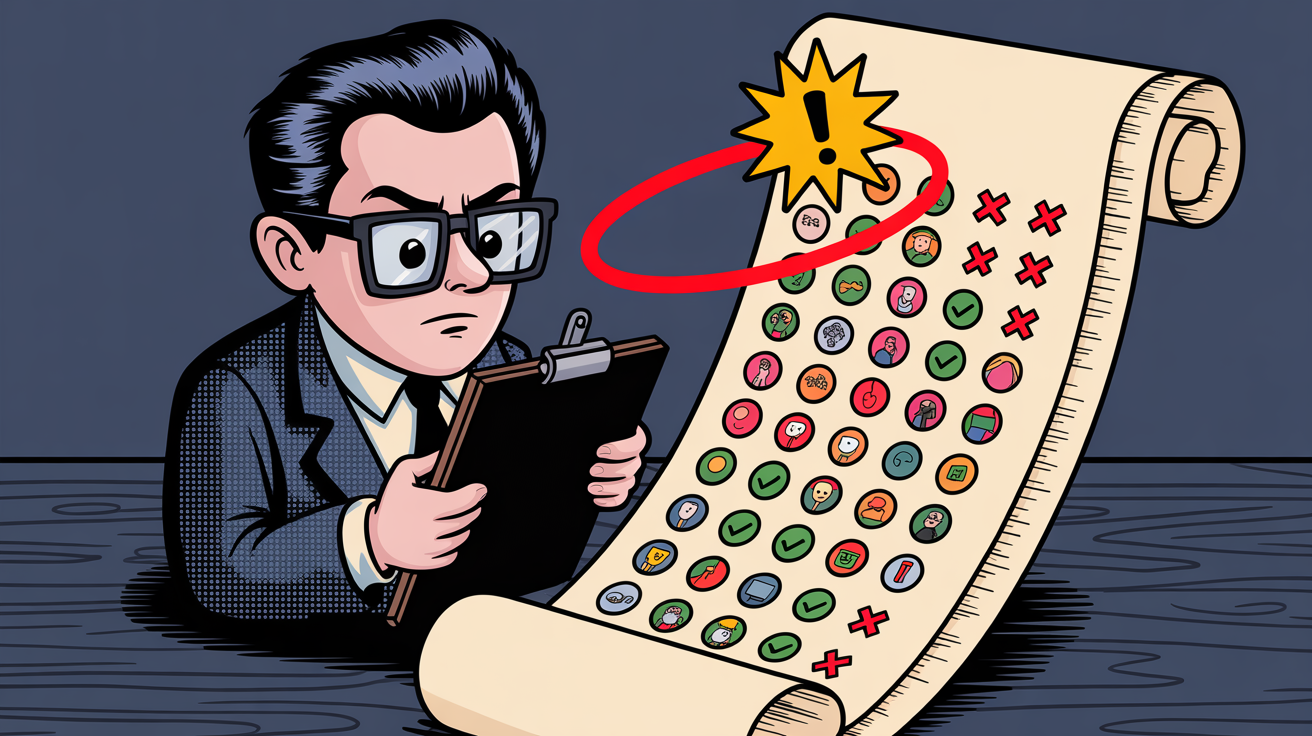

Vanilla Sonnet vs Sonnet + grafted skills: B wins 74%. Structured planning: D wins 88%.

Skills Actually Help: The Numbers

We ran 50 senior-engineer prompts through vanilla Sonnet and Sonnet with grafted skills, then had Opus judge the answers blind. Skill grafting won 74% of decided pairs. Structured planning won 88%. Here's the full study, the methodology, and the categories where skills made the biggest difference.
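For readers unfamiliar with the metric, here is a minimal sketch of how a "win rate of decided pairs" can be computed. It assumes that "decided" means the judge picked a winner, so ties are dropped from the denominator. The labels `"A"`, `"B"`, and `"tie"`, the function name, and the toy verdict list are all hypothetical illustrations, not the study's actual data or code.

```python
from collections import Counter


def win_rate_of_decided(verdicts, candidate="B", tie_label="tie"):
    """Return the share of non-tie verdicts won by `candidate`.

    Assumption: a pair is "decided" when the judge's verdict is not a tie.
    Returns None when no pair was decided.
    """
    counts = Counter(verdicts)
    # Only verdicts that name a winner count toward the denominator.
    decided = sum(n for label, n in counts.items() if label != tie_label)
    if decided == 0:
        return None
    return counts[candidate] / decided


# Toy verdicts for illustration only (not the study's results).
toy_verdicts = ["B", "A", "B", "tie", "B"]
rate = win_rate_of_decided(toy_verdicts)
print(f"{rate:.0%}")  # 3 wins out of 4 decided pairs -> 75%
```

Excluding ties makes the number easier to read as a head-to-head result, but it also means the tie count should be reported alongside it.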

A reasonable question: does any of this matter?