503 skills. Five feedback signals. One side-effect registry. Skills that get sharper as you use them.

Skills That Improve Themselves: Attribution, Lifecycle, and Fleet Agents

Skills aren't static documents. Every graft, every accept/reject, every downstream rating feeds an attribution system that decides which skills to sharpen, deprecate, or split. Here's the SQLite-backed scoring, the side-effect registry, and the fleet of always-on agents that keep 503 skills coherent.

If you've ever rolled out an AI coding tool to your team, you know the half-life problem. The first month, everyone's quoting it in standups. Three months later, someone notices it's confidently giving 2023 React advice on a 2026 codebase, and the trust starts evaporating. Six months later it's the thing nobody admits to using.

The brittleness isn't about the model. The model gets better. It's about the catalog of expertise the model leans on — patterns, library conventions, framework versions, the specific Stripe API change that landed in March. That stuff drifts. And when it drifts quietly, you don't find out until the wrong advice has already shipped.

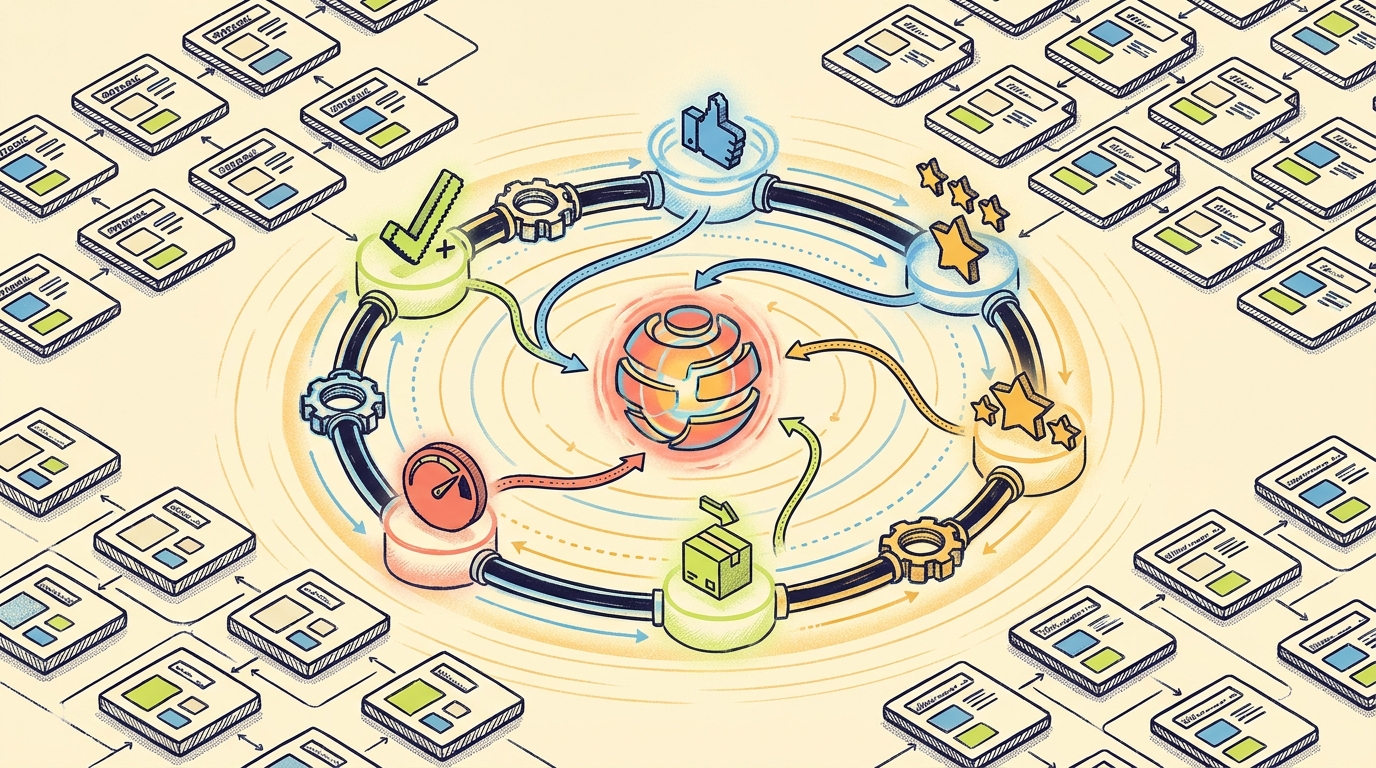

We run 503 hand-written skills against this problem. Scaling that catalog without it rotting is the entire product. So we built a closed loop: every skill graft gets graded, every grade feeds the matcher, and three small background agents run continuously to flag the skills that are starting to lie.

This post is the engineering of that loop, in three parts: how we capture signal (the attribution system), how we propagate change (the lifecycle side-effect registry), and what runs in the background (the fleet agents).

Step 1: capture signal

The matcher we wrote about last week ends with an attribution k-NN stage. That stage is only as good as its data. The data comes from five orthogonal signals captured every time a skill gets used.

| Signal | What it measures | Source |
|---|---|---|
| Completion | Did the agent finish the node it was assigned? | DAG executor lifecycle |
| User acceptance | Did the human accept the predicted plan? | /next-move accept/modify/reject |
| Downstream rating | Did the next node rate this node's output highly enough to use it? | Node-to-node evaluation hooks |
| Self-confidence | Did the agent self-report high confidence on its own work? | Confidence prompt block in skill node executor |
| Shipped | Did the work get committed, deployed, or otherwise leave the repo? | Git observation hooks |

These are stored in ~/.windags/skill-state.db, a SQLite database, keyed by (skill_id, query_embedding, timestamp). We deliberately keep them separate. The temptation is to collapse them into a single 0–1 quality score for each skill. That collapse hides the most important information.

Here's the kind of pattern we'd lose:

redis-cache-pattern has perfect completion (1.0), high self-confidence (0.92), and excellent downstream ratings (0.88). But user acceptance is only 0.31 and shipped rate is 0.18.

Translation: the skill produces convincing-looking output that the next node is happy to consume, but humans reject it and don't ship it. That's a plausible-but-wrong failure mode — way more dangerous than a skill that fails loudly. We'd never see it in a single number. The first time we caught one of these in the wild via the signal split, we just stared at the dashboard for a minute. The skill looked great by every machine metric. Humans were quietly walking away from it. That asymmetry is the whole reason we keep the signals separate.
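As a rough illustration of why the split matters, here is a minimal Python sketch that stores the five signals as separate columns and flags the plausible-but-wrong pattern. The table name skill_observations comes from the commands at the end of this post; the column layout, the two thresholds, and the healthy-skill row are assumptions made for the example, not the actual schema.

```python
import sqlite3

# Hypothetical layout: one row per graft, one column per signal.
SCHEMA = """
CREATE TABLE skill_observations (
    skill_id          TEXT NOT NULL,
    query_embedding   BLOB,
    observed_at       TEXT NOT NULL,
    completion        REAL,
    user_acceptance   REAL,
    downstream_rating REAL,
    self_confidence   REAL,
    shipped           REAL
)
"""

# Assumed cutoffs; the post does not state thresholds.
MACHINE_MIN = 0.8  # machine-side signals at or above this count as "strong"
HUMAN_MAX = 0.4    # human-side signals at or below this count as "weak"


def plausible_but_wrong(conn):
    """Skills whose machine-side signals look strong while humans walk away."""
    rows = conn.execute(
        """
        SELECT skill_id
        FROM skill_observations
        GROUP BY skill_id
        HAVING AVG(completion) >= :hi
           AND AVG(self_confidence) >= :hi
           AND AVG(downstream_rating) >= :hi
           AND AVG(user_acceptance) <= :lo
           AND AVG(shipped) <= :lo
        ORDER BY skill_id
        """,
        {"hi": MACHINE_MIN, "lo": HUMAN_MAX},
    ).fetchall()
    return [row[0] for row in rows]


conn = sqlite3.connect(":memory:")
conn.execute(SCHEMA)
conn.executemany(
    "INSERT INTO skill_observations VALUES (?, ?, ?, ?, ?, ?, ?, ?)",
    [
        # Numbers from the redis-cache-pattern example above.
        ("redis-cache-pattern", None, "t0", 1.0, 0.31, 0.88, 0.92, 0.18),
        # Made-up comparison row.
        ("healthy-skill", None, "t0", 0.95, 0.85, 0.90, 0.80, 0.70),
    ],
)
flagged = plausible_but_wrong(conn)
print(flagged)
```

A single averaged score would put both rows in roughly the same band; the per-signal query only picks out the first.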

Each signal is also tied to a context vector — the embedding of the task that triggered the graft. So we can ask: does this skill perform well on tasks like this one? That's how attribution k-NN re-ranks the cascade.
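One way to picture that re-ranking step is the sketch below. It assumes a cosine-similarity k-NN over past observations of each skill and blends the neighbors' mean user acceptance into the matcher's base score. The blend weight, k, the default prior, and the choice to use only the acceptance signal are all simplifications for illustration, not a description of the production matcher.

```python
import math
from dataclasses import dataclass


@dataclass
class Observation:
    skill_id: str
    embedding: list[float]   # embedding of the task that triggered the graft
    user_acceptance: float   # 0.0 to 1.0


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def knn_prior(skill_id, query, history, k=3, default=0.5):
    """Mean acceptance over the k past grafts of this skill closest to the query."""
    own = [o for o in history if o.skill_id == skill_id]
    if not own:
        return default  # no evidence yet: neutral prior (assumed value)
    nearest = sorted(own, key=lambda o: cosine(query, o.embedding), reverse=True)[:k]
    return sum(o.user_acceptance for o in nearest) / len(nearest)


def rerank(candidates, query, history, weight=0.5):
    """candidates: (skill_id, base_score) pairs from the earlier matcher stages."""
    scored = [
        (sid, (1 - weight) * base + weight * knn_prior(sid, query, history))
        for sid, base in candidates
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Toy 2-d embeddings: axis 0 is "Stripe webhook"-like tasks, axis 1 is everything else.
history = [
    Observation("webapp-paywall-implementation", [1.0, 0.0], 0.0),
    Observation("webapp-paywall-implementation", [0.9, 0.1], 0.0),
    Observation("webapp-paywall-implementation", [0.95, 0.05], 0.0),
    Observation("webapp-paywall-implementation", [0.0, 1.0], 1.0),
    Observation("webapp-payment-integration", [0.9, 0.2], 1.0),
]
ranked = rerank(
    [("webapp-paywall-implementation", 0.8), ("webapp-payment-integration", 0.7)],
    query=[1.0, 0.0],
    history=history,
)
print(ranked)
```

In this toy data the skill with the higher base score drops to second place, because its nearest past grafts in that neighborhood were all rejected. Its one accepted graft sits far from the query and does not count.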

Step 2: side-effect registry

When a skill changes, fifteen things have to happen. Here's the list:

| # | Side effect |
|---|---|
| 1 | Regenerate Tool2Vec embeddings (Haiku call, embed, average, cache) |
| 2 | Invalidate the BM25 index |
| 3 | Update the content hash in skill_identity |
| 4 | Generate hero image for the marketing site |
| 5 | Generate skill art (the catalog tile) |
| 6 | Update the skills.json catalog used by the marketing site |
| 7 | Update the per-skill JSON (one file per skill) |
| 8 | Copy to ~/.claude/skills/ for native Claude Code use |
| 9 | Copy to the windags-skills plugin distribution |
| 10 | Run the L3 structural audit |
| 11 | Update the pairs-with graph in the attribution DB |
| 12 | Refresh blog data if any post references this skill |
| 13 | Re-run the affordance scorecard |
| 14 | Bump the skill changelog |
| 15 | Notify the gap detector to recheck coverage |

When skills change manually, half of these get forgotten. The result is a marketing site that lists a deprecated skill, an embedding cache that points to a stale content hash, and a plugin distribution that's three weeks behind the local catalog.

The registry is the fix. Every side effect is a function with a declared trigger. The trigger is a property of the change: content_hash_changed, description_changed, pairs_with_changed, created, deleted, renamed.

When a skill changes, we compute the change set, look up the side effects whose triggers fire, and run them in dependency order. Twelve of the fifteen are pure functions of the file system; three (the two image-generation steps and the plugin push) are external and idempotent.
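The trigger lookup and dependency ordering can be sketched in a few lines of Python. The trigger names below are the ones listed above. The effect names, which triggers fire which effect, and the dependency edges are invented for the example; the real mapping lives in the SKILL-LIFECYCLE.md table.

```python
from graphlib import TopologicalSorter

# Hypothetical registry: effect name -> (triggers that fire it, effects it must run after).
REGISTRY = {
    "update_content_hash": (
        {"content_hash_changed", "created"},
        set(),
    ),
    "regenerate_embeddings": (
        {"content_hash_changed", "description_changed", "created"},
        {"update_content_hash"},
    ),
    "invalidate_bm25_index": (
        {"content_hash_changed", "created", "deleted", "renamed"},
        set(),
    ),
    "update_pairs_graph": (
        {"pairs_with_changed", "created", "deleted"},
        set(),
    ),
    "update_catalog_json": (
        {"description_changed", "created", "deleted", "renamed"},
        {"update_content_hash"},
    ),
    "copy_to_plugin_dist": (
        {"content_hash_changed", "created", "renamed"},
        {"update_catalog_json"},
    ),
}


def plan(change_set):
    """Return the fired side effects, ordered so dependencies run first."""
    fired = {name for name, (triggers, _) in REGISTRY.items() if triggers & change_set}
    # Keep only dependency edges between effects that actually fired.
    graph = {name: REGISTRY[name][1] & fired for name in sorted(fired)}
    return list(TopologicalSorter(graph).static_order())


order = plan({"content_hash_changed"})
print(order)
```

Declaring triggers as data means a forgotten step becomes a missing table row rather than a missing line in someone's checklist. In this sketch a dependency that did not fire is simply dropped from the plan.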

The registry is in packages/core/src/lifecycle/. There's a 700-line table at the top of SKILL-LIFECYCLE.md that lists every side effect, every trigger, every location it touches. That table is the source of truth.

Step 3: fleet agents

The registry handles changes. Some things aren't changes — they're conditions that need to be checked continuously. That's what fleet agents are for.

Fleet agents are small background processes that wake on a schedule (or an event), do one bounded thing, and sleep. They're shell scripts today, declared per-project as cron-like entries; the YAML schema for declarative fleet agents is the next step.

Three matter for skill quality:

The gap detector

Reads the last 200 grafts. For each, asks: did any skill in the catalog actually match well, or did the cascade have to settle for a mediocre best-effort? If best-effort is happening more than 5% of the time in some semantic neighborhood, it flags a gap — a region of task-space the catalog doesn't cover. The gap report lands in docs/SKILL_GAP_ANALYSIS.md and gets reviewed weekly.

This is how webapp-paywall-implementation got created. The gap detector noticed several weeks of "subscription gating" and "feature-flag paywall" tasks that were getting marginal matches.
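The core check is simple enough to sketch. The 200-graft window and the 5% rate come from the description above. The cutoff for a "good" match, and the idea that each graft already carries a neighborhood label (from clustering query embeddings, say), are assumptions for the example.

```python
from collections import defaultdict

WINDOW = 200       # from the post: last 200 grafts
GAP_RATE = 0.05    # from the post: best-effort more than 5% of the time
GOOD_MATCH = 0.6   # assumed cutoff below which a match counts as best-effort


def find_gaps(grafts):
    """grafts: (neighborhood, best_match_score) pairs, oldest first."""
    totals = defaultdict(int)
    weak = defaultdict(int)
    for neighborhood, score in grafts[-WINDOW:]:
        totals[neighborhood] += 1
        if score < GOOD_MATCH:
            weak[neighborhood] += 1
    return sorted(n for n, total in totals.items() if weak[n] / total > GAP_RATE)


# Made-up data: 3 of 10 subscription-gating grafts settled for a weak match.
grafts = (
    [("subscription-gating", 0.4)] * 3
    + [("subscription-gating", 0.9)] * 7
    + [("react-forms", 0.9)] * 20
)
gaps = find_gaps(grafts)
print(gaps)
```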

The paradigm monitor

Skills age. Frameworks change. A 2024 React skill that confidently recommends class components is now actively wrong. The paradigm monitor reads each skill's references and SKILL.md against a small set of "epoch markers" — when libraries had major paradigm shifts — and flags skills that look stuck in a previous era.

It's conservative on purpose. False positives are cheap (a skill gets reviewed) but false negatives are expensive (an agent gives outdated advice with confidence). When the monitor is wrong, it's wrong toward "double-check this," not "ship it as-is."
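A toy version of that check, assuming epoch markers can be expressed as regular expressions over the skill text. The marker format and the single React entry are illustrative, not the monitor's actual data.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class EpochMarker:
    library: str
    shift: str          # human-readable description of the paradigm shift
    stale_pattern: str  # regex that suggests pre-shift advice


# Hypothetical marker set with one example entry.
MARKERS = [
    EpochMarker(
        library="react",
        shift="hooks replaced class lifecycles as the default",
        stale_pattern=r"\bcomponentDidMount\b|\bextends\s+(React\.)?Component\b",
    ),
]


def flag_stale(skill_text, markers=MARKERS):
    """Return the shifts a skill appears to predate. One hit is enough to flag."""
    return [m.shift for m in markers if re.search(m.stale_pattern, skill_text)]


old_skill = "class Cart extends React.Component { componentDidMount() { load(); } }"
new_skill = "function Cart() { useEffect(() => { load(); }, []); }"
print(flag_stale(old_skill))
print(flag_stale(new_skill))
```

Flagging on a single hit is what makes the sketch err toward review: a skill that mentions class components only to warn against them still gets flagged, which costs one human look.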

The evaluator

Runs the L3 structural audit on every skill that hasn't been audited in 30 days. Scores five elements (decision triggers, decision flow, anti-patterns, references, quality gates) and writes a report. Skills that drop below threshold get queued for the upgrade pipeline we described earlier.
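The scheduling and threshold logic might look like the sketch below. The 30-day interval and the five elements come from the paragraph above. The 0-to-1 scoring scale, the mean-based rule, the threshold value, and the sample skill timestamps are assumptions.

```python
from datetime import datetime, timedelta

ELEMENTS = (
    "decision_triggers",
    "decision_flow",
    "anti_patterns",
    "references",
    "quality_gates",
)
AUDIT_INTERVAL = timedelta(days=30)  # from the post
THRESHOLD = 0.6                      # assumed; the post does not give the value


def due_for_audit(last_audited, now):
    """last_audited: skill_id -> datetime of last audit, or None if never audited."""
    return [
        skill_id
        for skill_id, ts in sorted(last_audited.items())
        if ts is None or now - ts > AUDIT_INTERVAL
    ]


def needs_upgrade(scores):
    """Assumed rule: queue the skill when the mean element score is below threshold."""
    mean = sum(scores[element] for element in ELEMENTS) / len(ELEMENTS)
    return mean < THRESHOLD


now = datetime(2026, 1, 31)
due = due_for_audit(
    {
        "redis-cache-pattern": datetime(2026, 1, 25),          # audited 6 days ago
        "webapp-paywall-implementation": datetime(2025, 12, 1),  # audited 61 days ago
        "stripe-subscription-webhooks": None,                   # never audited
    },
    now,
)
print(due)
```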

These three agents run continuously. They cost cents per day. The result: a catalog that doesn't quietly rot.

What this looks like in practice

Last month, this loop produced a real lifecycle event. Here's the timeline:

- Day 0. Five users in a row run /next-move for tasks like "set up Stripe webhooks for subscription events." The cascade picks webapp-paywall-implementation. None of them accept the plan.

- Day 1. Attribution k-NN demotes webapp-paywall-implementation for that semantic neighborhood. The cascade starts picking webapp-payment-integration instead.

- Day 7. Gap detector reports: 14 grafts in 7 days landed on a skill that's now demoted, with no good replacement. Flags it.

- Day 9. Paradigm monitor independently flags webapp-paywall-implementation: its references are pre-2025 Stripe API.

- Day 12. Curator (a human in this case, will be an agent eventually) reviews. Splits the skill in two: stripe-subscription-webhooks (modern, focused) and webapp-paywall-implementation (legacy, marked for retire). Both run through the L3 upgrade pipeline.

- Day 12. Side-effect registry fires: regenerate embeddings, regenerate hero images, copy to distribution, update the marketing site, update the plugin.

- Day 13. Cascade now picks stripe-subscription-webhooks for the original tasks. User acceptance is back up.

Total elapsed time of human attention: maybe 30 minutes on day 12. Everything else was automated. Watching that play out in real time was the closest thing we've had to a "the system is alive" moment on this project: independent signals flagging the same skill from different angles, the registry firing fifteen side effects in dependency order, the cascade self-correcting without anyone touching it.

What this is not

It's tempting to read all this as "the skills are using machine learning to update themselves." That's not what's happening, and the distinction matters.

- We're not auto-rewriting skill bodies. Skills are still authored by humans (or the curator agent under human review). The system flags what to rewrite and fires the side effects after a rewrite. The text itself is human work.

- We're not running an RL policy. Attribution k-NN is a re-ranking prior, not a policy. There's no exploration term, no regret bound, no trained network.

- We're not treating skills as bandit arms. We tried; it didn't work. Skills are documents in a retrieval index. The right framing is search relevance, not multi-armed bandits.

What it is: a feedback loop with five separated signals, a typed side-effect registry, and three small background agents. None of those pieces is novel in isolation. The work is in making them coherent — same skill identity model, same triggers, same data store.

Where this is going

Three things are next.

The --share flag. Right now everything is local. We want an opt-in telemetry pipeline so we can aggregate signal across users and find skill weaknesses faster. The privacy model has to be right before we ship that. (Hashing query embeddings before upload, never the original task text, no user IDs, etc.)

Declarative fleet agents. Today the fleet is shell scripts. The YAML schema in ADR-0019 lets project owners declare agents — "every commit, run the gap detector"; "every Tuesday, run the paradigm monitor against React skills" — without writing scripts.

The curator agent. The day 12 step in the timeline above is currently a human. We have a windags-curator skill ready to run that step. Not enabling it until we trust the gap detector and paradigm monitor enough that we'd accept their outputs without manual review.

Try it

Every WinDAGs install gets the local attribution DB and the side-effect registry by default. To see the data:

```
sqlite3 ~/.windags/skill-state.db
.schema skill_observations
SELECT skill_id, COUNT(*) FROM skill_observations GROUP BY skill_id ORDER BY 2 DESC LIMIT 10;
```

To wire up the MCP and start contributing to your own attribution data:

```
claude mcp add windags -- npx -y @workgroup-ai/mcp-server
```

Then run anything that grafts a skill. The signals start flowing.

The matcher gets sharper. The catalog gets healthier. That's the point.